There’s a lot of discussion right now around a simple question:

Is RAG still needed?

At first glance, it seems like a fair debate.

If modern models can absorb massive context windows, then maybe we no longer need embeddings, retrieval layers, chunking strategies, vector databases, and all the machinery that defined the last generation of enterprise AI.

Maybe we can just hand the model the whole document — or even a whole set of documents — and let it figure things out.

For some tasks, that is increasingly true.

If you are analyzing a single long report, contract, specification, or technical paper, long context can be a very natural solution. In those cases, retrieval pipelines can feel like unnecessary plumbing.

But once you move from one document to real enterprise work, the debate changes completely.

And that is where the industry is often asking the wrong question.

The question is not:

“Do we still need RAG?”

The better question is:

“What architecture gives an AI system the right information, in the right form, at the right cost, with the right level of trust?”

That is a very different problem.

And it is the problem Empower AI is designed to solve.

Why RAG existed in the first place

RAG became popular because enterprises had a practical problem to solve:

- models are trained on frozen data,

- enterprise knowledge changes constantly,

- internal documents and systems are not part of base model training,

- and users need answers grounded in current, relevant information.

RAG solved part of that problem by doing something very useful: retrieve relevant information first, then generate.

That was a huge step forward.

But RAG was never the full answer. It was one architectural pattern for connecting models to external knowledge.

Now that long context is improving, some people are treating that as a replacement for retrieval.

In reality, long context changes the tradeoffs. It does not eliminate the deeper architectural requirements of enterprise AI.

What long context improves — and where it helps

Long context is genuinely valuable.

It can reduce complexity when:

- the task is bounded,

- the corpus is small,

- the user is working over one or a few long documents,

- and the cost of processing large prompts repeatedly is acceptable.

For single-document analysis, long context can feel much more natural than chunk-and-retrieve.

That’s real progress.

But long context still leaves several fundamental enterprise problems unsolved.

Why long context does not replace enterprise knowledge architecture

1. Scale is still bigger than context

Even very large context windows are tiny compared to real enterprise knowledge.

Organizations do not operate on one product manual, one policy document, or one report.

They operate across:

- thousands of PDFs,

- SOPs,

- field notes,

- CRM histories,

- support cases,

- training materials,

- system-of-record data,

- and constantly changing operational state.

At that scale, some kind of retrieval and selection layer is unavoidable.

2. “Everything in context” is not the same as “the right thing in context”

Just because a model can technically ingest more tokens does not mean it will reliably focus on the right evidence.

Large prompts still create:

- signal dilution,

- prioritization ambiguity,

- version confusion,

- and missed edge-case constraints.

In regulated domains, a buried warning or contraindication is not an inconvenience. It is a failure.

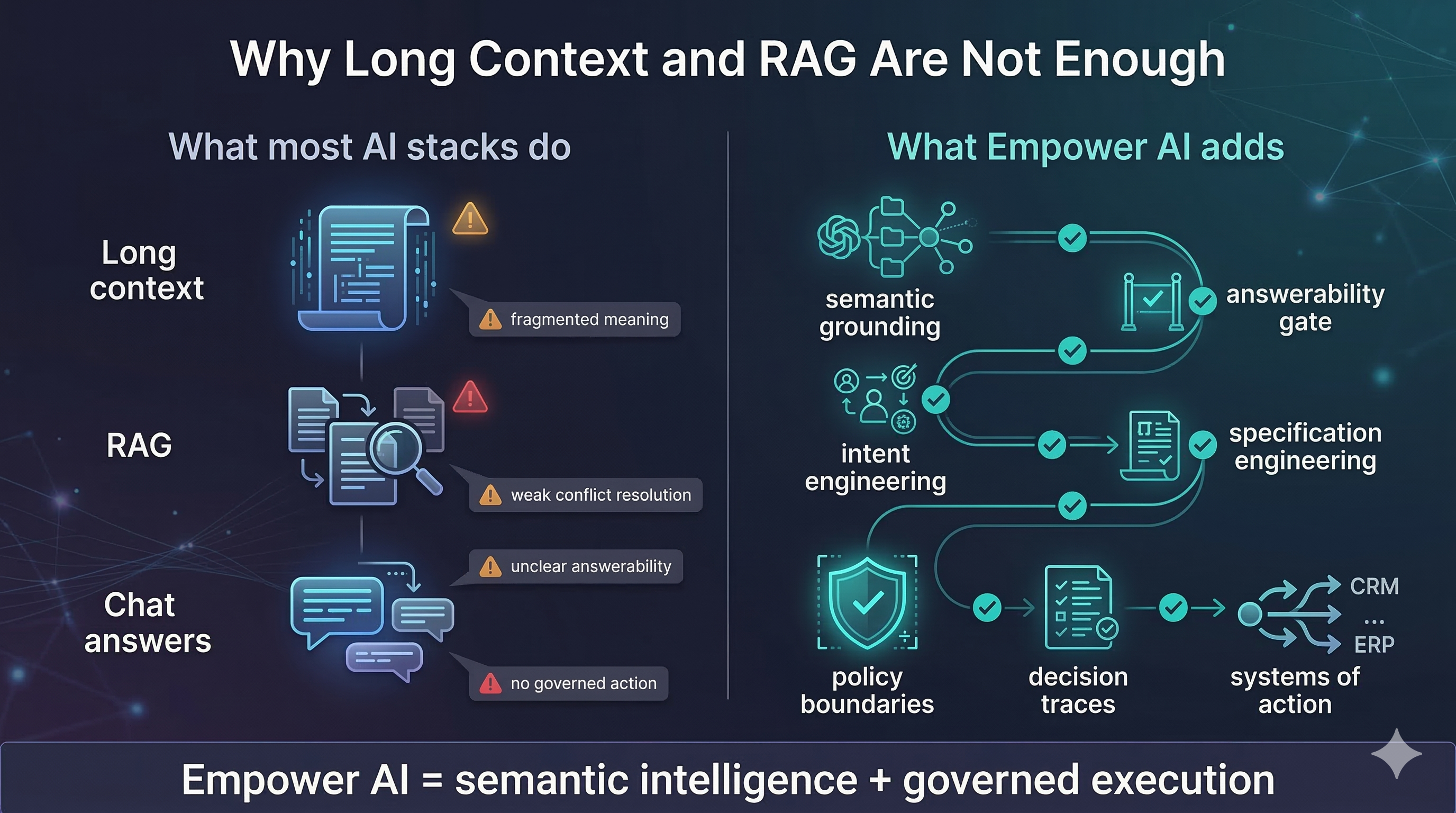

3. Retrieval alone is not enough either

This is the part many discussions miss.

Traditional RAG still has its own limits:

- it often retrieves chunks without shared enterprise meaning,

- it can surface contradictory material without resolving it,

- it does not inherently know whether the system has enough evidence to answer safely,

- and it does not create governed actions.

That means the real future is not RAG, or long context.

The future is a stack that includes both — but goes further.

The real answer: governed knowledge orchestration

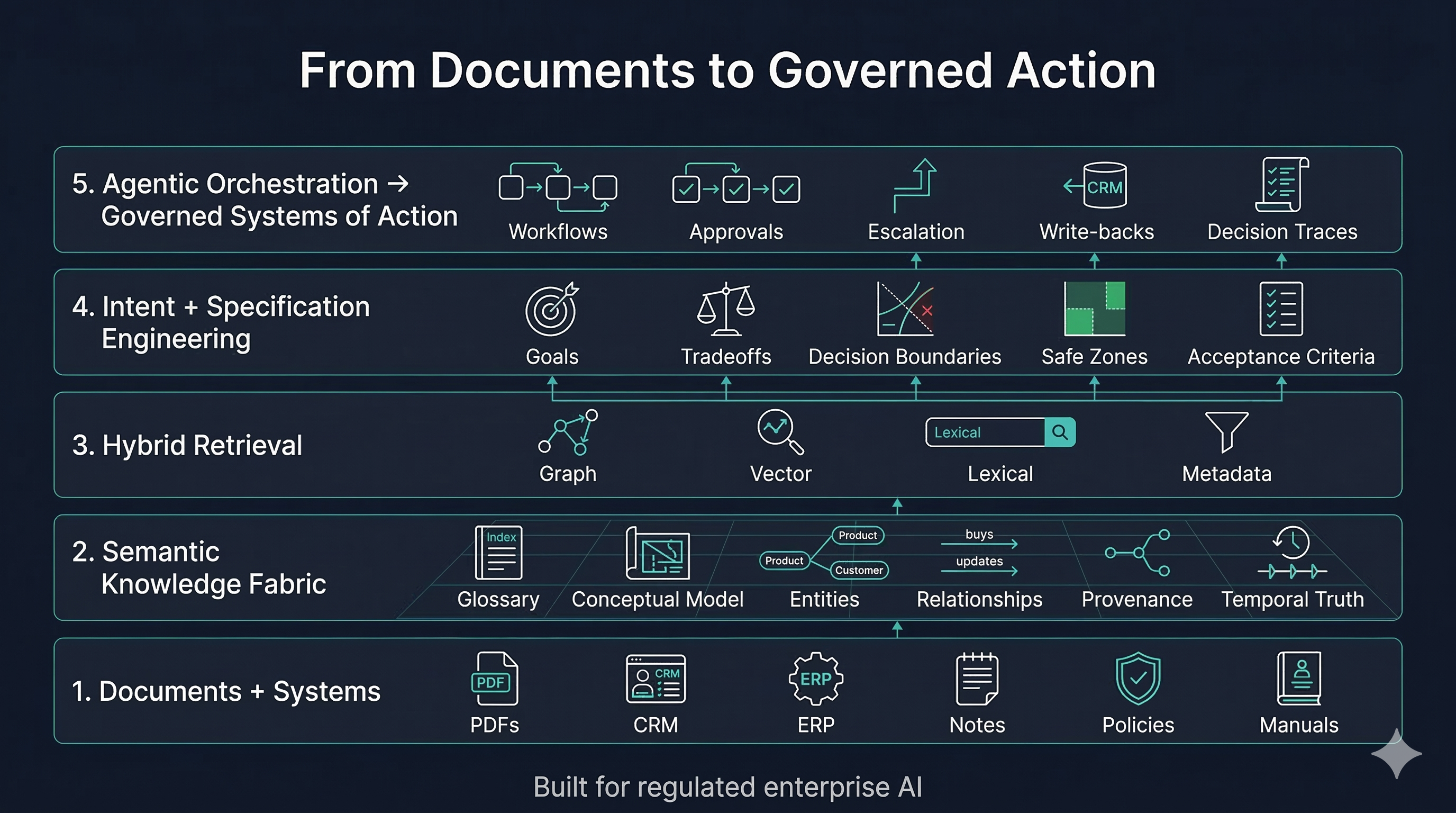

At EnPraxis, we think the real architecture for enterprise AI looks like this:

1. Long context, where appropriate

Use it for bounded, local reasoning over a small set of documents.

2. Hybrid retrieval, where scale demands it

Use vector retrieval, lexical search, metadata filters, and graph-aware retrieval together.

3. A semantic fusion fabric

This is the critical layer.

You do not want AI built on fragmented chunks, isolated bottom-up ontologies, or disconnected data silos.

You want:

- a business glossary,

- a conceptual semantic model,

- governed mappings,

- entity resolution,

- provenance,

- temporal awareness,

- and fact resolution.

This is what turns information into shared meaning.

4. Intent and specification layers

Enterprise AI is not just about what the model knows.

It is also about:

- what the organization wants,

- what tradeoffs matter,

- what boundaries cannot be crossed,

- what constitutes “done,”

- and when to escalate.

That means:

- context engineering tells the AI what to know,

- intent engineering tells it what to optimize,

- specification engineering tells it what a valid outcome looks like.

5. Agentic orchestration

Once the system is grounded and governed, AI can move from answer generation to safe action: workflows, approvals, exception handling, write-backs, documentation, and decision traces.

That is the difference between a system of answers and a system of action.

So, is RAG still needed?

Yes.

But not as a standalone architecture. And not as the center of the story.

RAG is still useful because retrieval is still necessary. Long context is also useful because direct full-document reasoning is sometimes the right move.

The mistake is to think either one is enough.

In serious enterprise settings, especially regulated ones, the winning architecture is:

retrieve + ground + reason + verify + act

That requires:

- hybrid retrieval,

- semantic structure,

- provenance,

- answerability checks,

- policy constraints,

- intent models,

- and agentic execution.

What this means for Empower AI

Empower AI is not a “better RAG” platform.

It is a semantic intelligence and governed execution platform.

It uses:

- long context where it makes sense,

- retrieval where it is necessary,

- and semantic + intent + orchestration layers where trust, safety, and execution matter.

That’s why Empower is built for high-criticality, regulated industries — healthcare, life sciences, MedTech, pharma, financial services, and any environment where the system must do more than produce plausible text.

It must know:

- what it is being asked,

- whether it has enough approved knowledge to answer,

- what policies and constraints apply,

- and whether it should answer, escalate, or act.

That is the real frontier.

Not “Is RAG still needed?”

But: how do we build AI systems that remain connected to enterprise knowledge, organizational intent, and operational reality — safely, repeatably, and at scale?

That is the problem we are solving.

RAG still matters. Long context matters. But governed knowledge orchestration matters more.

Ready to go beyond RAG?

See how Empower AI's semantic fusion fabric and governed orchestration give regulated enterprises the architecture they actually need — not just better retrieval, but grounded, safe, and auditable systems of action.