Platform

Data Loss Prevention for AI and Agentic Workflows

EnPraxis applies policy-aware protection across the full lifecycle of enterprise AI — from ingestion and retrieval to prompting, generation, and agentic action.

The shift

From document protection to governed intelligence protection

Traditional DLP was built for files, endpoints, and network traffic. Modern AI systems introduce new risks: sensitive context entering prompts, data crossing trust boundaries, generated outputs disclosing protected information, and agents acting on restricted data.

EnPraxis treats DLP as governed control of sensitive data across the entire AI workflow — not as a narrow file security feature. This includes:

- What data may be ingested

- Who may retrieve it and for what purpose

- What context may be sent to a model

- What can be shown in a response

- What an agent is allowed to do next

In regulated and high-criticality environments, access alone is not enough. The real question is whether data is being used appropriately — for the right purpose, in the right workflow, within the right trust boundary.

How it works

How EnPraxis enforces DLP

EnPraxis makes DLP part of the semantic and agentic fabric itself. Content is classified by meaning, policy is enforced at retrieval and generation time, and outputs and actions are checked before release or execution.

Semantic Classification

Content is classified by meaning, sensitivity, provenance, regulatory type, tenant boundary, and allowed use — not just file labels or keywords.

Policy-Aware Retrieval

Users and agents retrieve only what they are entitled to access, and only what is appropriate for the task and context.

Minimum Necessary Prompting

Prompt context is filtered and minimized so models receive only what is required. Sensitive values can be masked or tokenized, and certain content classes can be blocked from external model submission entirely.

Output and Action Controls

Responses are checked for policy violations, and sensitive actions such as export, sharing, or workflow execution can be gated or escalated.

Full Auditability

Every sensitive interaction can be traced: what was requested, what was retrieved, what was withheld, what was sent to a model, and what action was taken.
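As a rough illustration of the trace described above, an audit entry could carry one field per question. This is a minimal sketch, not the EnPraxis schema: `AuditRecord`, its field names, and `to_log_line` are hypothetical names chosen for this example.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class AuditRecord:
    """Hypothetical audit entry; one field per question in the text above."""
    request: str          # what was requested
    retrieved: list       # what was retrieved
    withheld: list        # what was withheld by policy
    sent_to_model: list   # what was actually sent to a model
    action_taken: str     # what action followed
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def to_log_line(record: AuditRecord) -> str:
    """Serialize the record as one JSON line for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Recording what was withheld alongside what was retrieved is the design point here: a reviewer can see not only what the model saw, but what policy kept away from it.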

Five enforcement zones

DLP across the full AI lifecycle

Every content object carries policy metadata: sensitivity, regulated type, tenant, jurisdiction, provenance, allowed use, and retention rules.

Classification lives in the canonical semantic model — not in ad hoc connector logic — so policy travels with the knowledge.
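One way to picture policy metadata that travels with the knowledge is a small record attached to every content object and inherited by anything derived from it. The sketch below is illustrative only: `PolicyMetadata`, `ContentObject`, `derive_chunk`, and the sensitivity levels are assumed names, not the platform's actual model.

```python
from dataclasses import dataclass
from enum import Enum
from typing import FrozenSet, Optional


class Sensitivity(Enum):
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


@dataclass(frozen=True)
class PolicyMetadata:
    """Hypothetical policy envelope for one content object."""
    sensitivity: Sensitivity
    regulated_type: Optional[str]   # e.g. "PHI"; None if unregulated
    tenant: str
    jurisdiction: str
    provenance: str                 # source system of record
    allowed_uses: FrozenSet[str]    # e.g. {"summarize", "answer"}
    retention_days: int


@dataclass(frozen=True)
class ContentObject:
    object_id: str
    text: str
    policy: PolicyMetadata


def derive_chunk(parent: ContentObject, chunk_id: str, text: str) -> ContentObject:
    """A derived chunk reuses its parent's policy, so policy follows the content."""
    return ContentObject(object_id=chunk_id, text=text, policy=parent.policy)
```

Because the policy sits on the object rather than in connector code, a chunk produced for indexing carries the same tenant, sensitivity, and allowed-use constraints as its source.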

Before content is retrieved for RAG or GraphRAG, the platform checks identity, role, purpose-of-use, source permissions, and tenant isolation.
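The retrieval checks listed above can be sketched as a single predicate applied to each search hit before it becomes prompt context. The types and field names here (`Requester`, `Candidate`, `may_retrieve`, `filter_candidates`) are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requester:
    user_id: str
    roles: frozenset
    tenant: str
    purpose: str  # declared purpose-of-use, e.g. "claims-review"


@dataclass(frozen=True)
class Candidate:
    doc_id: str
    tenant: str
    allowed_roles: frozenset
    allowed_purposes: frozenset
    source_acl: frozenset  # user ids permitted in the source system


def may_retrieve(req: Requester, doc: Candidate) -> bool:
    """Every check must pass; any single failure withholds the document."""
    return (
        req.tenant == doc.tenant                 # tenant isolation
        and bool(req.roles & doc.allowed_roles)  # role
        and req.purpose in doc.allowed_purposes  # purpose-of-use
        and req.user_id in doc.source_acl        # identity and source permissions
    )


def filter_candidates(req, candidates):
    """Split search hits into (released, withheld) before prompt assembly."""
    released = [d for d in candidates if may_retrieve(req, d)]
    withheld = [d for d in candidates if not may_retrieve(req, d)]
    return released, withheld
```

Note that purpose-of-use is evaluated alongside role: a user with the right role but the wrong declared purpose still gets nothing back.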

The model sees only the minimum necessary context. Sensitive values are masked, restricted content is blocked, and model routing sends designated requests only to private endpoints.
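A simplified sketch of this step follows. It assumes each chunk already carries a content class from upstream classification, and it uses a single regular expression as a stand-in for sensitive-value detection; the labels, `BLOCKED_FROM_EXTERNAL`, and the function names are hypothetical.

```python
import re
from dataclasses import dataclass

# Illustrative only: matches the common US SSN shape, e.g. 123-45-6789.
SSN_PATTERN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

# Hypothetical content classes that must never reach an external model.
BLOCKED_FROM_EXTERNAL = {"restricted"}


@dataclass(frozen=True)
class Chunk:
    text: str
    content_class: str  # assumed labels: "public", "internal", "restricted"


def mask(text: str) -> str:
    """Replace sensitive values with a placeholder token."""
    return SSN_PATTERN.sub("[SSN]", text)


def choose_endpoint(chunks) -> str:
    """Route to a private endpoint if any chunk is blocked from external models."""
    if any(c.content_class in BLOCKED_FROM_EXTERNAL for c in chunks):
        return "private"
    return "external"


def build_prompt_context(chunks, endpoint: str):
    """Drop chunks the endpoint may not receive, then mask what remains."""
    kept = []
    for c in chunks:
        if endpoint == "external" and c.content_class in BLOCKED_FROM_EXTERNAL:
            continue
        kept.append(mask(c.text))
    return kept
```

Blocking and masking are kept as separate decisions: a restricted chunk is dropped for external endpoints regardless of masking, while masking still applies to everything that is sent.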

Generated outputs and agent actions pass through a final governance layer. Redaction, suppression, approval, and human-in-the-loop checkpoints are enforced before anything reaches users or systems.
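The final governance layer can be thought of as two gates, one for generated text and one for proposed actions. The following is a minimal sketch under assumed names (`Decision`, `HIGH_RISK_ACTIONS`, `review_output`, `review_action`); exact string matching stands in for whatever detection a real deployment would use.

```python
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    REDACT = "redact"
    REQUIRE_APPROVAL = "require_approval"


# Hypothetical set of actions that need a human checkpoint.
HIGH_RISK_ACTIONS = {"export", "share", "execute_workflow"}


def review_output(text: str, protected_terms):
    """Redact protected terms from a generated response before release."""
    hits = [t for t in protected_terms if t in text]
    if not hits:
        return Decision.ALLOW, text
    for term in hits:
        text = text.replace(term, "[REDACTED]")
    return Decision.REDACT, text


def review_action(action: str, approved_by=None) -> Decision:
    """Gate high-risk actions until a named human has approved them."""
    if action in HIGH_RISK_ACTIONS and approved_by is None:
        return Decision.REQUIRE_APPROVAL
    return Decision.ALLOW
```

For example, `review_action("export")` returns `Decision.REQUIRE_APPROVAL` until an approver is supplied, while a low-risk action such as summarizing passes straight through.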

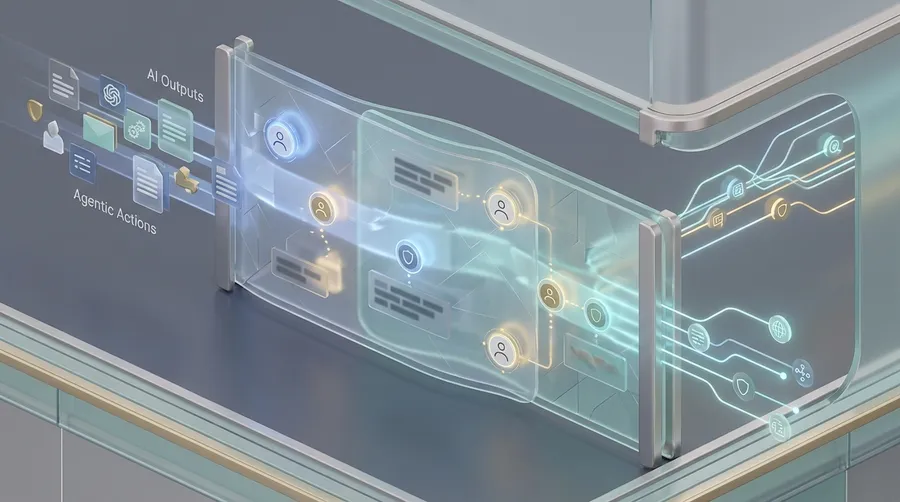

Agent governance

DLP that is agent-aware, not just document-aware

Classic DLP stops files from leaving. AI-era DLP must also control agent behavior.

In EnPraxis, every agent operates within explicit policy boundaries:

- Each agent has an explicit scope of allowed data classes

- Each skill has permitted actions and sources

- Agents cannot freely combine sources outside policy

- Agents cannot email, export, or copy data unless authorized

- High-risk actions require human-in-the-loop approval

This fits naturally with EnPraxis's governed orchestration approach, where skills and workflows carry their own policy constraints.
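The agent boundaries above could be expressed as a policy object that is consulted before every agent step. This is a sketch under stated assumptions: `AgentPolicy`, `authorize`, and the three outcome strings are illustrative names, not a documented EnPraxis interface.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Hypothetical per-agent policy boundary."""
    allowed_data_classes: frozenset
    allowed_actions: frozenset
    approval_required: frozenset  # high-risk actions needing a human


def authorize(policy, action, data_classes, human_approved=False) -> str:
    """Return "deny", "needs_approval", or "allow" for a proposed agent step."""
    # Every data class touched by the step must be inside the agent's scope,
    # which also prevents combining in-scope and out-of-scope sources.
    if not set(data_classes) <= policy.allowed_data_classes:
        return "deny"
    if action not in policy.allowed_actions:
        return "deny"
    if action in policy.approval_required and not human_approved:
        return "needs_approval"
    return "allow"
```

Denial is the default outcome: an action or data class that is not explicitly listed in the policy is refused rather than assumed safe.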

Egress controls and trust boundaries

From a customer-trust perspective, the central question is: what leaves my boundary?

EnPraxis provides configurable controls at every egress point:

- Provider allowlists and private endpoints

- Configurable "no external model" zones

- Configurable "no retention" model policies

- Encryption in transit and at rest

- Customer-managed keys where the deployment supports them

- Deployment modes: SaaS, private cloud, on-prem, air-gapped
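Several of these controls can be read as one egress decision made before any request leaves the boundary. The sketch below assumes a simple policy record; `EgressPolicy`, `egress_allowed`, and the zone and provider names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EgressPolicy:
    """Hypothetical egress configuration for one tenant."""
    provider_allowlist: frozenset
    no_external_model_zones: frozenset  # zones limited to private endpoints
    require_no_retention: bool = True


def egress_allowed(policy, provider, zone, provider_retains_data,
                   is_private_endpoint=False) -> bool:
    """Decide whether a request from a zone may be sent to a model provider."""
    if zone in policy.no_external_model_zones and not is_private_endpoint:
        return False
    if provider not in policy.provider_allowlist:
        return False
    if policy.require_no_retention and provider_retains_data:
        return False
    return True
```

With an allowlist of `{"provider-a"}` and a `"clinical"` no-external-model zone, a clinical request is refused unless it targets a private endpoint, and any request to an unlisted provider is refused outright.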

Differentiation

Why EnPraxis is different

Most DLP controls for AI remain shallow: connector permissions, static labels, pattern matching. EnPraxis adds semantic understanding and governed orchestration.

It can distinguish between different kinds of enterprise knowledge, apply policy based on meaning and intended use, and control not just access to information, but how that information may participate in reasoning, generation, and action.

That means understanding:

- This is a reimbursement policy

- This is a clinical claim

- This is internal field guidance

- This is customer-confidential

- This may be summarized for role X but not quoted externally

- This may support answer Y but not action Z

That is how DLP becomes usable for enterprise AI — not just enforceable.
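To make the "summarized for role X but not quoted externally" idea concrete, allowed use can be modeled as a lookup keyed by the kind of knowledge and the intended use. The content kinds, uses, and roles below are invented for illustration and do not describe an actual EnPraxis rule set.

```python
# Hypothetical rules: (content kind, intended use) -> roles permitted.
# A missing key means the use is denied for everyone.
USE_RULES = {
    ("internal_field_guidance", "summarize"): {"field_rep", "manager"},
    ("internal_field_guidance", "quote_externally"): set(),
    ("reimbursement_policy", "answer"): {"field_rep", "manager", "support"},
}


def use_permitted(content_kind: str, use: str, role: str) -> bool:
    """Check whether a role may put this kind of content to this use."""
    return role in USE_RULES.get((content_kind, use), set())
```

Here a field rep may receive a summary of internal field guidance, nobody may quote it externally, and a reimbursement policy may support an answer but not an unlisted use such as export.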

Ready to see it in action?

See how EnPraxis enforces governed data protection across the full AI lifecycle.